What Agent Native Infrastructure Actually Looks Like

A developer types eight words into Claude Code: "deploy a supabase server in Netcup EU please." Eight minutes later, a 13-container Supabase stack is running on bare metal in Nuremberg, Germany. Studio URL, credentials, public IP, monthly cost: returned. No YAML. No Terraform. No dashboard click.

Then the same conversation generates an SSH key, registers it on the VM, runs a health audit from inside the box, recommends a plan upgrade based on actual RAM consumption, and tears the whole thing down with a single sentence. End to end, no manual ops.

This is what an agent-native PaaS actually looks like in production. Here is the run, screenshot by screenshot.

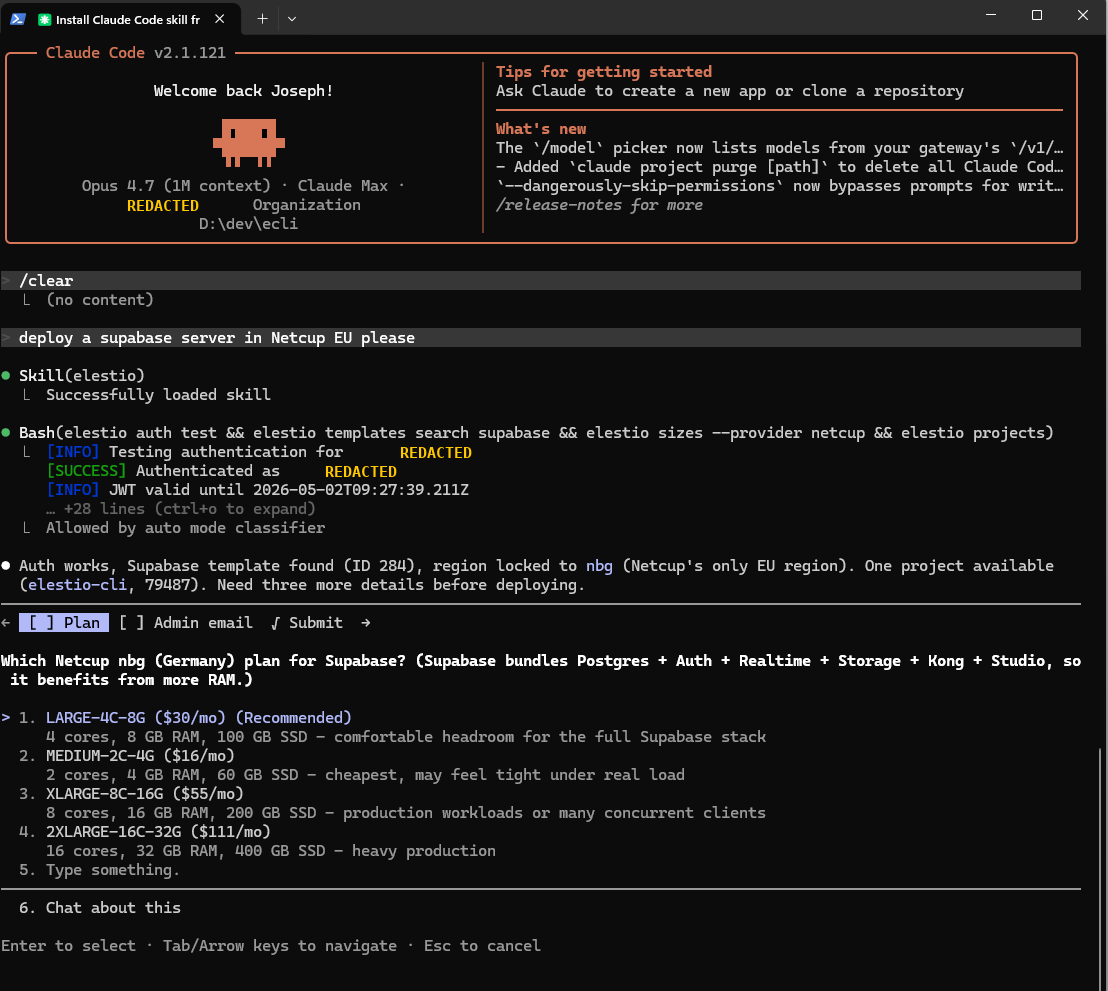

1. Plain English in, priced plan menu out

The prompt is "deploy a supabase server in Netcup EU please." Claude Code loads the Elestio skill, chains four commands in a single call (elestio auth test && elestio templates search supabase && elestio sizes --provider netcup && elestio projects), and within seconds the agent knows: auth works, the Supabase template exists (ID 284), Netcup's only EU region is nbg (Nuremberg), and one project is available.
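
The four lookups do not depend on each other, so a client could also issue them concurrently. Here is a minimal Python sketch of that pattern. The helper names run_one and run_parallel are hypothetical, and the demo uses stand-in commands rather than the Elestio CLI so that it runs anywhere.

```python
import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor


def run_one(argv):
    """Run one command and return (exit_code, stdout)."""
    done = subprocess.run(argv, capture_output=True, text=True)
    return done.returncode, done.stdout.strip()


def run_parallel(commands):
    """Run independent commands concurrently; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max(1, len(commands))) as pool:
        return list(pool.map(run_one, commands))


if __name__ == "__main__":
    # Stand-in commands (assumption): an agent would pass the four
    # discovery commands quoted above instead.
    probes = [[sys.executable, "-c", f"print({n} * 2)"] for n in range(4)]
    for code, out in run_parallel(probes):
        print(code, out)
```

Threads are enough here because each worker only waits on a child process. The agent can act on all four answers as soon as the slowest one returns.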

Then it presents four pre-priced plan options. No catalog browsing, no "contact sales." Every plan has a concrete monthly cost attached to a concrete VM size.

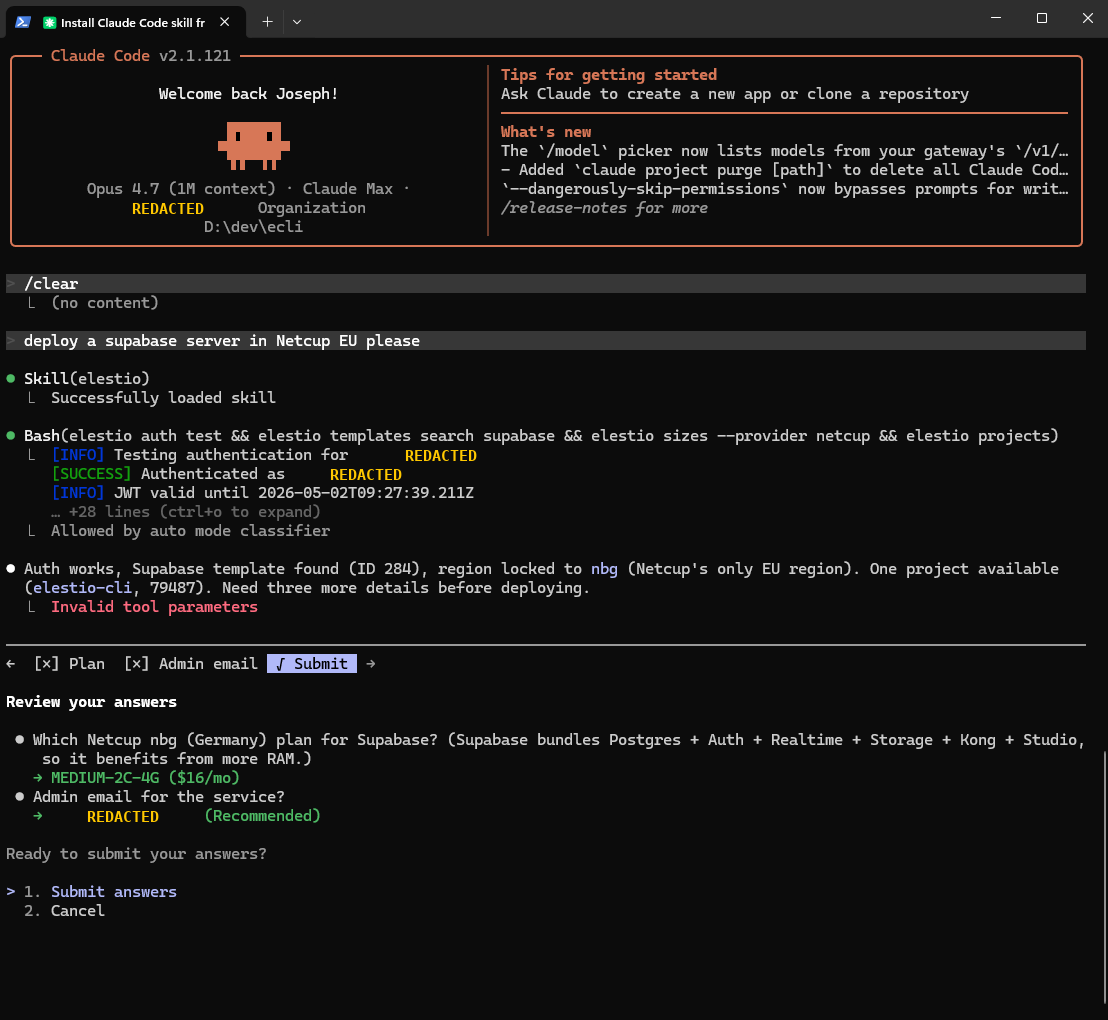

2. Three answers, no infra knowledge required

The agent collects only what it cannot infer: plan, admin email, name. Three answers. No YAML, no Terraform, no Helm chart, no Kubernetes context. The developer never sees a config file.

This is the part most "AI infra" demos skip. The agent owns the entire information-gathering loop, and the human only types when the agent literally cannot guess.

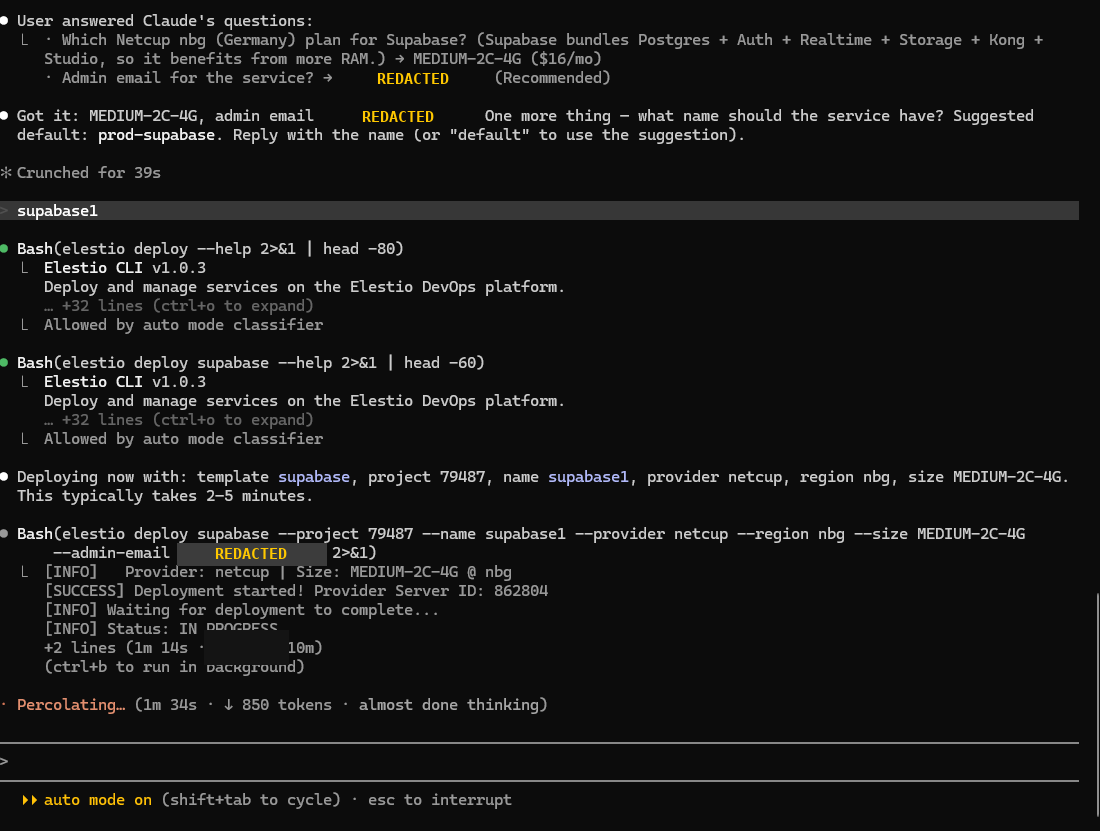

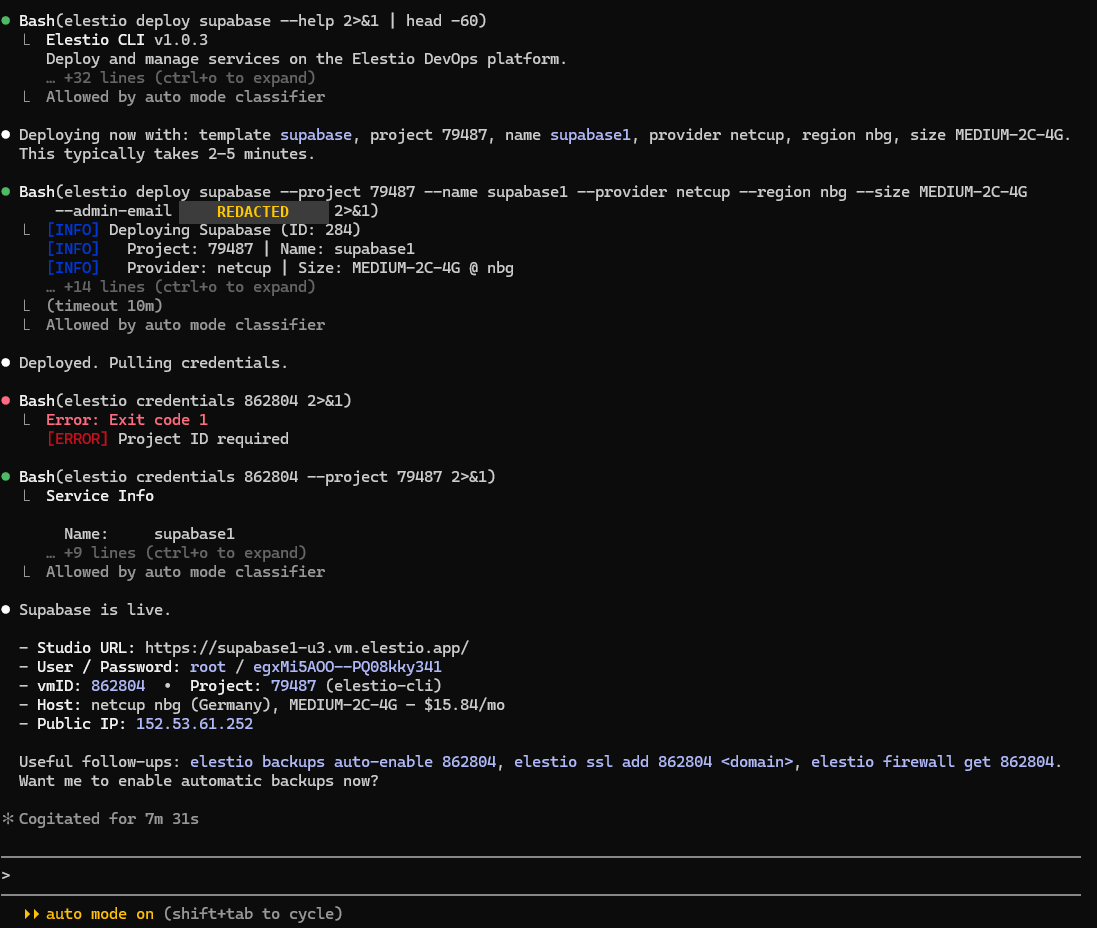

3. The agent owns the deploy loop

elestio deploy supabase --project ... --provider netcup --region nbg --size MEDIUM-2C-4G ... runs from inside the agent. The agent, not the human, owns the provisioning loop: timeout handling, status polling, retries, error parsing.
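
The status-polling half of that loop might look roughly like the sketch below. It is illustrative only: get_status is a hypothetical callable wrapping whatever status lookup the platform exposes, and the status strings and default timings are assumptions, not Elestio's actual values.

```python
import time


def wait_until_ready(get_status, timeout_s=900.0, interval_s=10.0,
                     clock=time.monotonic, sleep=time.sleep):
    """Poll get_status() until it returns "running" or the deadline passes.

    Returns True once the service is running and False on timeout.
    The "running" and "failed" status strings are assumptions.
    """
    deadline = clock() + timeout_s
    while True:
        status = get_status()
        if status == "running":
            return True
        if status == "failed":
            raise RuntimeError("deployment reported failure")
        if clock() >= deadline:
            return False
        sleep(interval_s)
```

Passing clock and sleep as parameters keeps the loop testable without waiting in real time.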

When the first elestio credentials call fails with Project ID required, the agent doesn't escalate to the human. It re-reads the error, fixes the command, retries. This is the boring engineering that separates "demo agent" from "production agent."
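
That read-fix-retry step can be sketched as a small repair loop. This is a hedged illustration, not the agent's actual logic: run is a hypothetical callable returning (exit_code, stderr_text), and fixing the error by appending a --project flag to this particular command is an assumption.

```python
def run_with_repair(run, argv, project_id, max_attempts=3):
    """Call run(argv), patching argv once if a known error appears.

    Returns the argv that finally succeeded. Only one error message is
    recognised; anything else is raised to the caller.
    """
    argv = list(argv)
    for _ in range(max_attempts):
        code, err = run(argv)
        if code == 0:
            return argv
        # Known, fixable error: add the missing project flag (assumed fix)
        if "Project ID required" in err and "--project" not in argv:
            argv += ["--project", str(project_id)]
            continue
        raise RuntimeError(f"unrecoverable error: {err}")
    raise RuntimeError("gave up after repeated failures")
```

The cap on attempts matters. An agent that retries indefinitely on an error it cannot parse is worse than one that escalates.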

4. Live in under 8 minutes

Supabase is running in a Netcup datacenter in Nuremberg, on AMD EPYC 9645 hardware, for $15.84/month. Studio URL, credentials, public IP, vmID, project ID: all returned in one structured block.

The agent then offers the next three commands you would actually want: enable backups, attach a domain with SSL, configure the firewall. Each is a one-liner. This is what "managed PaaS" should mean. Not "we wrote some Ansible playbooks." A real control surface, exposed to both humans and agents.

Time from prompt to running stack: under 8 minutes. Working time reported on the agent's side: 7 minutes 31 seconds.

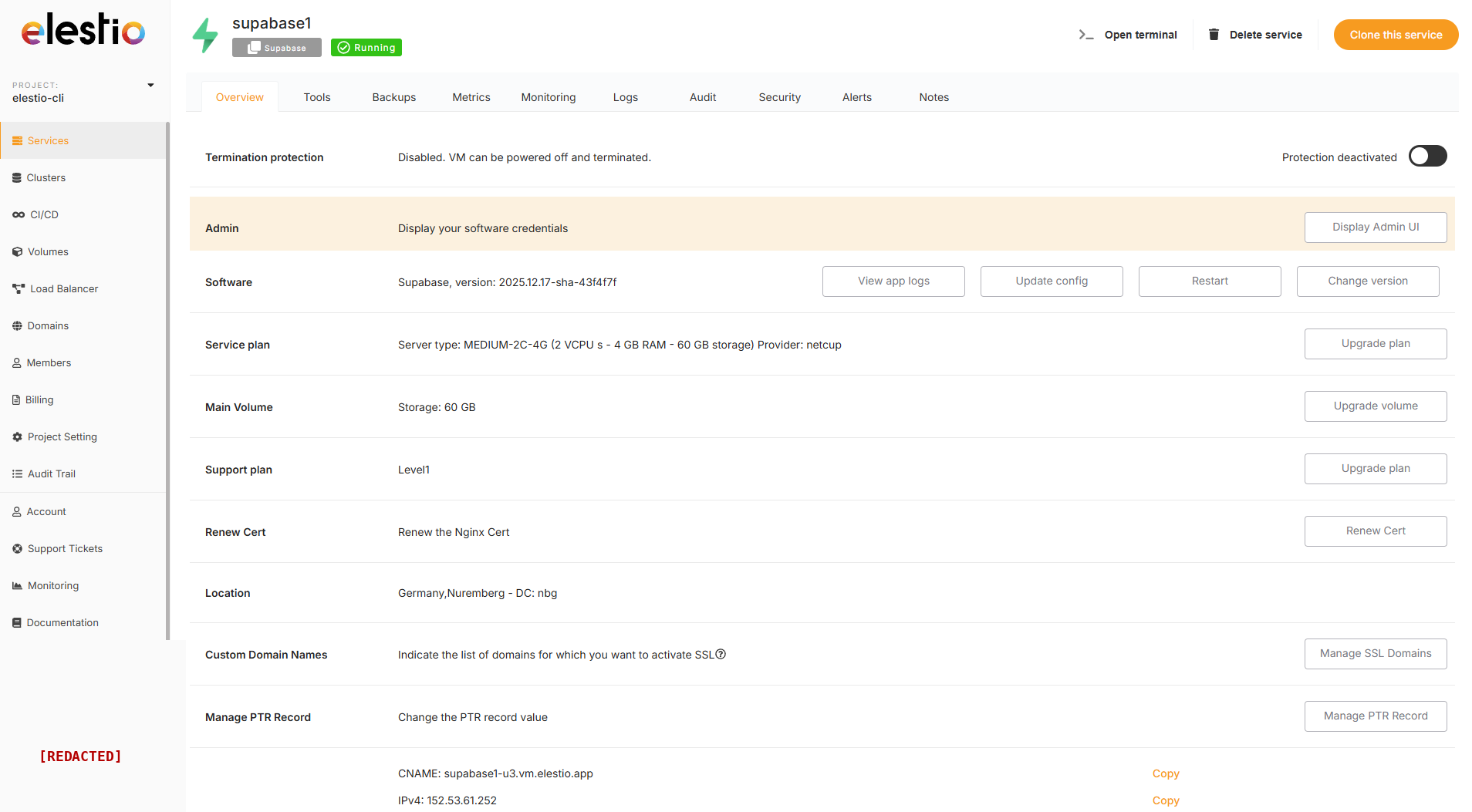

5. The web UI is the same control plane

Same VM, same workload, viewed from the Elestio dashboard. Backups, Metrics, Monitoring, Logs, Audit, Security, Alerts: all built-in tabs, not third-party tools you integrate later.

The point: the agent and the dashboard are operating on the same primitives. The CLI is not a toy wrapper. Anything you can do in the UI, you can do via the API the agent uses, and vice versa. No "agents are nice but production runs through the dashboard" split.

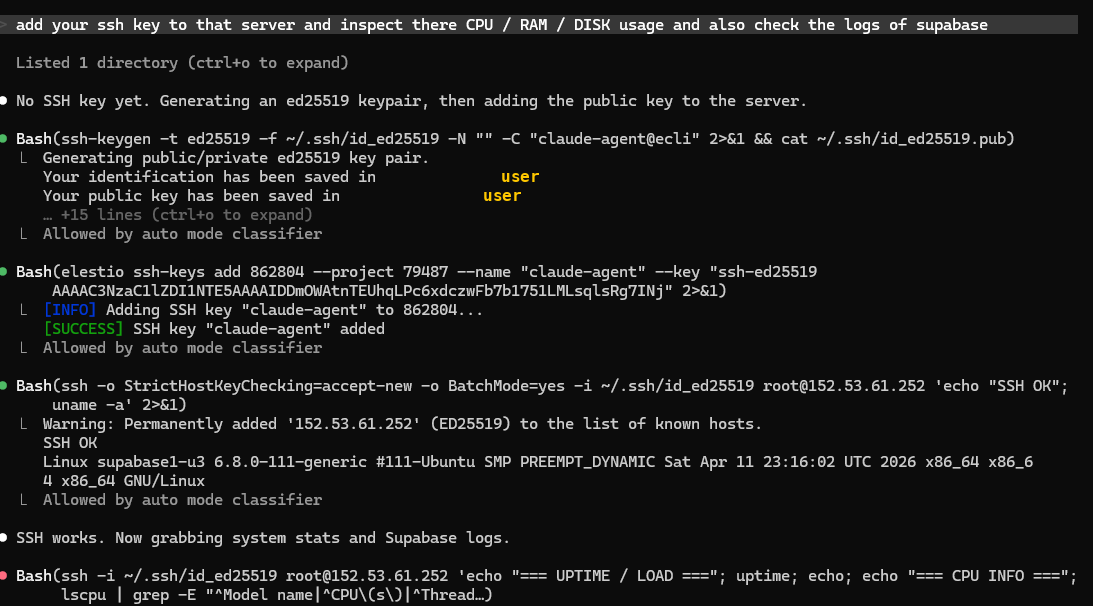

6. The agent self-provisions SSH

Next prompt: "add your ssh key to that server and inspect there CPU / RAM / DISK usage and also check the logs of supabase."

The agent generates an ed25519 keypair, registers the public key on the VM via the Elestio API (elestio ssh-keys add ...), and connects with StrictHostKeyChecking=accept-new. No human in the loop.
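
The local half of that step uses standard OpenSSH tooling. The sketch below only builds the two command lines. The helper names, the root login user, and the uptime probe are assumptions, and registering the public key with the platform is left out because the exact arguments are not shown in the run.

```python
def keygen_argv(key_path, comment="agent"):
    """argv for ssh-keygen: new ed25519 keypair with an empty passphrase."""
    return ["ssh-keygen", "-t", "ed25519", "-f", str(key_path),
            "-N", "", "-C", comment]


def ssh_argv(key_path, host, user="root", remote_cmd="uptime"):
    """argv for ssh that accepts an unknown host key on first contact.

    accept-new still rejects a host whose recorded key has changed.
    """
    return ["ssh", "-i", str(key_path),
            "-o", "StrictHostKeyChecking=accept-new",
            f"{user}@{host}", remote_cmd]
```

Each list can be handed to subprocess.run(argv, check=True). The accept-new value needs OpenSSH 7.6 or later. It avoids the interactive fingerprint prompt without disabling host key checks for hosts that are already known.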

This is the part that breaks most "infra automation" stories. Provisioning a VM is one API call. Operating it usually means a human gets paged. Here, the agent crosses the boundary into the OS by itself.

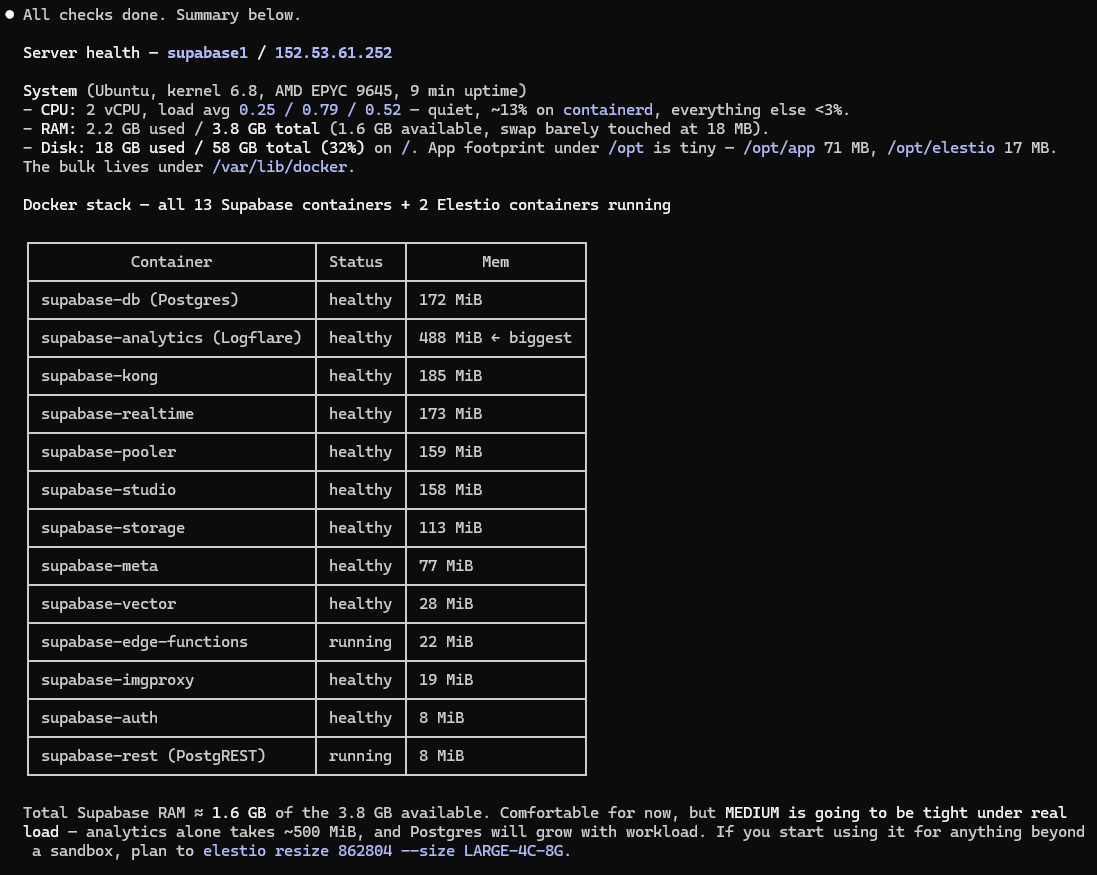

7. Health audit from inside the VM, written by AI

A full server health report, written by the agent in seconds, from data it pulled over the same SSH session. CPU load average 0.25 / 0.79 / 0.52, RAM 2.2 GB used of 3.8 GB, disk 18 GB of 58 GB, kernel 6.8.

Per-container memory attribution: supabase-analytics is the heaviest at 488 MiB, all 13 Supabase containers + 2 Elestio containers healthy. The agent does the math and recommends an upgrade: total Supabase RAM is ≈1.6 GB on a 3.8 GB box, comfortable for now but tight under real load. It even prepares the resize command (elestio resize ... --size LARGE-4C-8G).
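
The arithmetic behind that recommendation is simple enough to sketch. The snippet uses only the figures quoted above. The 50% cutoff in should_upgrade is an assumed rule of thumb, not the agent's documented policy.

```python
def to_mib(text):
    """Convert a size such as "488 MiB" or "1.5GiB" to MiB."""
    units = {"KiB": 1 / 1024, "MiB": 1.0, "GiB": 1024.0}
    for suffix, factor in units.items():
        if text.endswith(suffix):
            return float(text[: -len(suffix)]) * factor
    raise ValueError(f"unrecognised size: {text!r}")


def share_pct(part, whole):
    """Percentage of whole taken by part, rounded to one decimal."""
    return round(100.0 * part / whole, 1)


def should_upgrade(used_gb, total_gb, threshold_pct=50.0):
    """True when usage reaches the threshold (an assumed cutoff)."""
    return share_pct(used_gb, total_gb) >= threshold_pct


if __name__ == "__main__":
    print(share_pct(1.6, 3.8))  # Supabase containers as a share of the box
    print(share_pct(2.2, 3.8))  # everything in use, Supabase included
    print(should_upgrade(2.2, 3.8))
```

With the reported numbers, Supabase alone accounts for roughly 42% of RAM and total usage sits near 58%, which matches the "comfortable for now but tight under real load" verdict.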

This is FinOps and observability without a single dashboard click. A human would spend 30 minutes assembling this report. The agent did it in under a minute.

8. One-line teardown

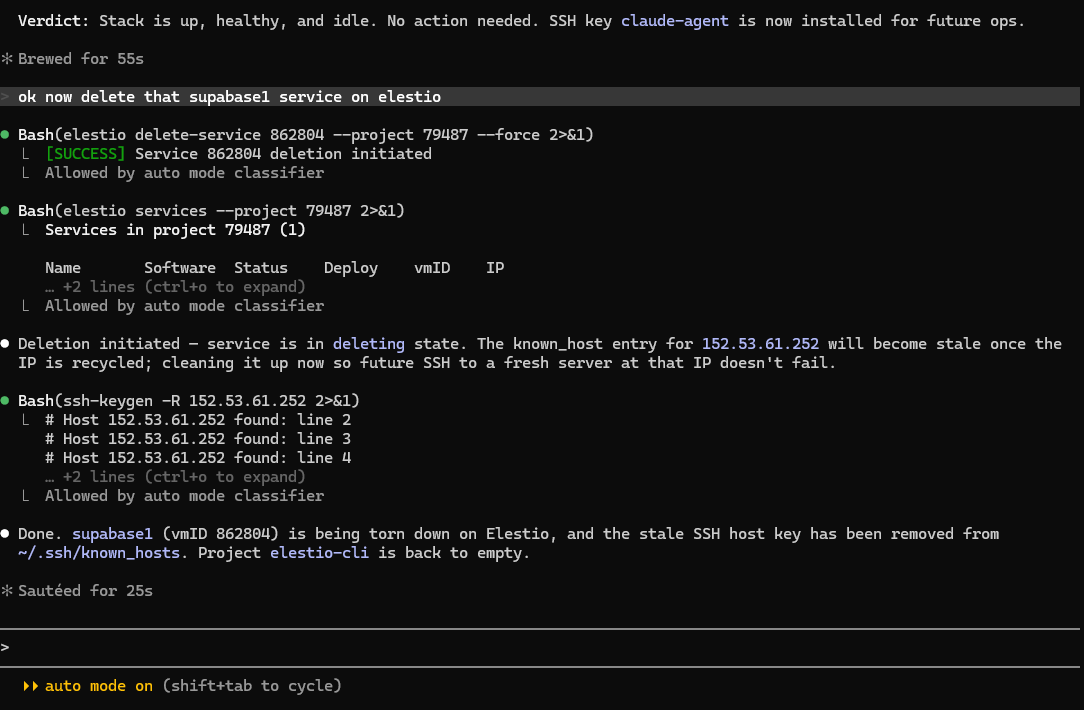

"ok now delete that supabase1 service on elestio."

The agent runs elestio delete-service ... --force, confirms the deletion is initiated, and proactively cleans up the stale SSH host key entry from ~/.ssh/known_hosts so the next time the IP gets recycled, SSH doesn't fail with a key mismatch.

That last detail is the tell. A demo agent provisions and forgets. A production agent thinks about the next time someone uses the same IP. Twenty-five seconds of agent work later, the project is empty again.
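
For readers who want to see what that cleanup amounts to, here is a minimal sketch that drops a host's plain-text entries from known_hosts content. The function name is hypothetical. In practice `ssh-keygen -R <host>` does the same job and also handles hashed entries, which this sketch deliberately leaves alone.

```python
def drop_host(known_hosts_text, host):
    """Return known_hosts content without plain-text entries for host.

    Hashed entries (host field starting with "|1|") are kept as-is;
    removing those takes `ssh-keygen -R <host>`.
    """
    kept = []
    for line in known_hosts_text.splitlines():
        fields = line.split()
        # Blank lines and comments pass through untouched
        if not fields or fields[0].startswith("#"):
            kept.append(line)
            continue
        # Markers such as @cert-authority precede the host field
        if fields[0].startswith("@") and len(fields) > 1:
            names = fields[1]
        else:
            names = fields[0]
        if host in names.split(","):
            continue  # stale entry for the recycled address
        kept.append(line)
    return "\n".join(kept) + ("\n" if kept else "")
```

Without this step, the next VM that inherits the same IP would present a different host key, and SSH would refuse to connect.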

What this actually changes

The catalog of 400+ open-source services on Elestio is not the moat. Lots of platforms have catalogs. The moat is that the AI agent owns the entire lifecycle on the same primitives: provision, SSH in, diagnose, recommend, clean up.

Most "AI for infra" stories stop at provisioning. The agent calls one Terraform plan, hands back to the human, and the human runs the rest in a terminal. Here the agent never hands back.

Stack deployed: Supabase (Postgres + Auth + Realtime + Storage + Kong + Studio, 13 containers). Provider: Netcup, Nuremberg, EU sovereign region. Plan: 2 vCPU / 4 GB RAM / 60 GB SSD. Hardware: AMD EPYC 9645. Time to live: under 8 minutes. Agent: Claude Code, Opus 4.7, 1M context.

Behind it: 9 cloud providers plus Netcup (AWS, GCP, Azure, Hetzner, OVH, Scaleway, DigitalOcean, Vultr, Linode), 400+ managed services, and 12+ AI coding agents wired in (Claude Code, Codex, Cursor, Copilot, Windsurf, Cline, Devin, OpenCode, and more).

If your platform's API is awkward enough that an agent has to escalate halfway through, you don't have an agent-native platform. You have a platform that an agent occasionally talks to. Those are different things.

Try it yourself

Tell your agent to read https://elest.io/agents and install the Elestio skill. That is the entire setup. Once the skill is loaded, your agent gets the same primitives this demo used: provision, SSH, diagnose, tear down. Eight words later, you have a stack.

Thanks for reading ❤️